The Harness and the Haze: Six Reflections on Stochastic Architecture

It feels like we are at an inflection point. The capabilities of Large Language Models (LLMs) are extraordinary: they offer new ways to synthesize information, automate complex cognitive tasks, and extract insights from vast unstructured datasets.

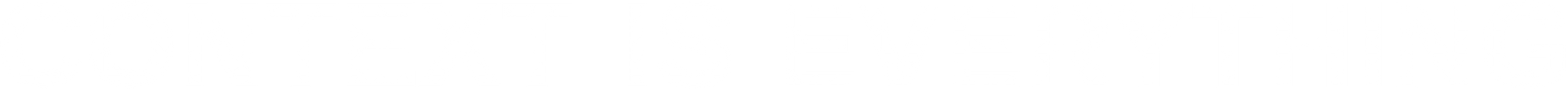

But integrating these stochastic components - these probabilistic black boxes - into systems that demand reliability, traceability, and accountability is profoundly difficult.

At AXONLORE, I am building a "System of Trust" for AI-driven analysis. My goal is to deliver Provable AI. In high-stakes environments, a plausible explanation from a machine is worthless. You need an immutable chain of evidence.

This mission demands an architecture that can harness the chaotic potential of AI while remaining grounded in the immutable facts of a knowledge graph.

The complexity here is significant.

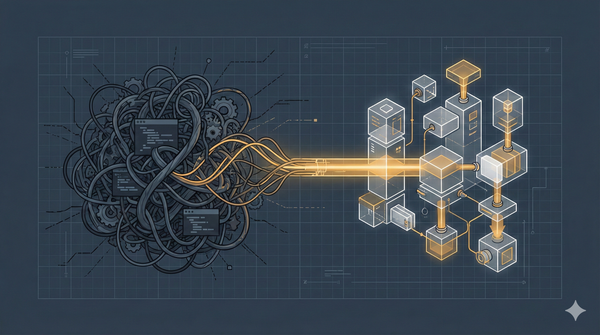

We are using the database (specifically Datomic, a technology that supports immutable facts and time-awareness) not just as the information model, but as the coordination mechanism itself. This has led to sophisticated mechanisms: deterministic dataflow primitives, dynamic DAG rewriting, atomic coordination, and time-aware memoization. They could only have been reached through significant, uninterrupted thought (aka "hammock time") about the nature of information, time, and process.

Here are six reflections on the complexity of this domain and the architectural choices required to navigate it.

1. The illusion of control

As humans we crave determinism.

As engineers, we build orchestration engines designed to execute Directed Acyclic Graphs (DAGs) with atomic precision. We define inputs, outputs, and sequences, expecting the system to comply predictably.

Then, we insert a stochastic black box - an LLM - into a stage and expect that precision to hold.

But integrating an LLM into a deterministic system isn't about making the LLM deterministic; it's about designing a deterministic harness. The moment you invite randomness into your system, your scaffolding must become absolutely rigid. The harness is responsible for coordination, state management, error handling, and validation.

Attempting to manage a complex, LLM-driven process with simple imperative scripts or webhook chains is doomed to fail. The orchestration engine must be robust enough to handle the inherent unpredictability of the components it manages.

This is why we use atomic database functions (like Datomic's compare-and-swap) for coordination. We rely on the database as the single source of truth for the state of the execution, ensuring that even when the underlying tasks are probabilistic, the management of those tasks is not.
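To make the idea concrete, here is a minimal sketch of compare-and-swap coordination. It is not Datomic code: `StateStore`, `try_claim`, and the status names are hypothetical, and an in-memory dictionary guarded by a lock stands in for the database's atomic operation.

```python
import threading


class StateStore:
    """In-memory stand-in (an assumption) for a database with atomic compare-and-swap."""

    def __init__(self):
        self._lock = threading.Lock()
        self._state = {}

    def get(self, task_id):
        with self._lock:
            return self._state.get(task_id)

    def compare_and_swap(self, task_id, expected, new):
        """Set the task's status to `new` only if it currently equals `expected`."""
        with self._lock:
            if self._state.get(task_id) != expected:
                return False  # someone else changed the state first
            self._state[task_id] = new
            return True


def try_claim(store, task_id):
    """A worker claims a task by moving it from 'pending' to 'running'."""
    return store.compare_and_swap(task_id, "pending", "running")


store = StateStore()
store.compare_and_swap("summarize-doc-1", None, "pending")  # register the task
first = try_claim(store, "summarize-doc-1")   # this worker wins the claim
second = try_claim(store, "summarize-doc-1")  # a second claim is rejected
```

However unpredictable the task's output turns out to be, only one worker can ever hold the claim, because the state transition either happens in full or not at all.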

2. Facts, time, and the peril of caching hallucinations

LLMs are expensive and slow. Stochastic systems, therefore, demand memoization. You must cache results to achieve acceptable performance and cost efficiency.

But caching an LLM output is fraught with peril. What if the underlying facts in your knowledge graph change? A naive cache, unaware of the temporal context of the data it used, will happily return a stale lie.

Information is situated in time.

A proper architecture must acknowledge that the "correct" answer is coupled to the system's state at a specific moment. This isn't just caching; it's temporal coherence.

To achieve this, we must fingerprint each input together with the database's transaction time. When we ask the cache for a result, we are not just asking, "Have you seen this prompt before?" We are asking, "Have you seen this prompt, applied to the world as it existed at this specific point in time?"
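A minimal sketch of such a time-aware cache might look like the following. The names `fingerprint`, `TemporalCache`, `cached_call`, and the integer `basis_t` (standing in for the database's transaction time) are assumptions for illustration, and `fake_model` replaces a real LLM call.

```python
import hashlib
import json


def fingerprint(prompt, basis_t):
    """Hash the prompt together with the point in time it was evaluated against."""
    payload = json.dumps({"prompt": prompt, "basis_t": basis_t}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class TemporalCache:
    """Cache keyed on (prompt, basis_t) rather than on the prompt alone."""

    def __init__(self):
        self._entries = {}

    def lookup(self, prompt, basis_t):
        return self._entries.get(fingerprint(prompt, basis_t))

    def store(self, prompt, basis_t, result):
        self._entries[fingerprint(prompt, basis_t)] = result


def cached_call(cache, model, prompt, basis_t):
    """Return a cached result only if it was computed against the same basis."""
    hit = cache.lookup(prompt, basis_t)
    if hit is not None:
        return hit
    result = model(prompt)
    cache.store(prompt, basis_t, result)
    return result


calls = []

def fake_model(prompt):
    # Stand-in for an expensive LLM call; records each invocation.
    calls.append(prompt)
    return f"answer #{len(calls)}"


cache = TemporalCache()
a = cached_call(cache, fake_model, "Who owns ACME?", basis_t=1000)
b = cached_call(cache, fake_model, "Who owns ACME?", basis_t=1000)  # cache hit
c = cached_call(cache, fake_model, "Who owns ACME?", basis_t=1001)  # world changed
```

Keying on a single global basis is the bluntest option: any new transaction invalidates every entry. A finer-grained variant could fingerprint only the time of the last change to the facts a prompt actually reads, at the cost of tracking those dependencies.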

Designing this requires understanding the epistemology of your system. How do you know what you know, and crucially, when did you know it?

3. Composition over command: the functional dataflow

If your architecture diagrams look like flowcharts, you might be building a brittle system.

Flowcharts are about control and sequence - concepts that stochastic components actively resist. When you try to dictate a rigid sequence to an inherently unpredictable process, the system fractures under the strain of edge cases and unexpected outputs.

Instead, we must design for dataflow.

We model workflows functionally: map, reduce, aggregate. We are transforming information, not dictating steps. This shift in perspective is crucial. In a functional dataflow architecture, the focus is on the data dependencies and the transformations applied to the data, rather than the order of execution.

This composability allows us to manage complexity and scale without centralized control. For example, a reduce operation shouldn't be a monolith. It should dynamically expand into a sequence of dependent reducer tasks at runtime based on the shape of the data it receives. Thinking functionally about parallel, probabilistic processes takes significant effort, but it yields systems that are far more resilient and adaptable.
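As a rough illustration of a reduce that expands at runtime, the sketch below turns one logical reduce into dependent reducer tasks whose number depends on how many inputs arrive. `ReducerTask`, `expand_reduce`, and the `fan_in` parameter are hypothetical names, and the tree-shaped expansion is just one possible strategy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReducerTask:
    task_id: str
    depends_on: tuple  # ids of leaf inputs or of earlier reducer tasks


def expand_reduce(input_ids, fan_in=3):
    """Expand one logical reduce into dependent reducer tasks.

    Each task combines at most `fan_in` inputs. Levels are added until a
    single task remains, so the plan's shape follows the size of the data.
    """
    if fan_in < 2:
        raise ValueError("fan_in must be at least 2")
    tasks = []
    level = list(input_ids)
    depth = 0
    while len(level) > 1:
        next_level = []
        for i in range(0, len(level), fan_in):
            task = ReducerTask(
                task_id=f"reduce-{depth}-{i // fan_in}",
                depends_on=tuple(level[i:i + fan_in]),
            )
            tasks.append(task)
            next_level.append(task.task_id)
        level = next_level
        depth += 1
    return tasks


# Seven chunks arrive at runtime; the plan is derived from that shape.
plan = expand_reduce([f"chunk-{n}" for n in range(7)], fan_in=3)
for task in plan:
    print(task.task_id, "<-", task.depends_on)
```

Nothing in the workflow definition mentions seven chunks or four reducer tasks. The plan is a function of the data, which is the point of treating the workflow as dataflow.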

4. The necessity of an immutable ledger

In high-stakes environments, when an AI-driven system makes a decision or generates an analysis, you must know why. You must be able to defend that decision to regulators, stakeholders, or auditors.

This requires more than logs. Logs are transient and often lack the necessary context. To achieve true traceability, you require an immutable, accretive execution ledger.

This ledger must capture everything: the exact inputs, the precise function or prompt executed, the raw outputs, the duration, and the subsequent interpretation.
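The fields listed above can be sketched as an append-only record. This is an in-memory illustration under assumed names (`LedgerEntry`, `ExecutionLedger`, `run_recorded`), not a description of how the ledger is actually stored, and `classify` stands in for a real model call.

```python
import time
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class LedgerEntry:
    """One executed task: what went in, what ran, what came out."""
    task_id: str
    function: str
    inputs: Any
    raw_output: Any
    duration_s: float
    interpretation: Any


class ExecutionLedger:
    """Accretive log: entries can be appended and read, never updated or removed."""

    def __init__(self):
        self._entries = []

    def append(self, entry):
        self._entries.append(entry)

    def entries(self):
        return tuple(self._entries)  # read-only snapshot


def run_recorded(ledger, task_id, fn, inputs, interpret):
    """Run `fn`, then record inputs, raw output, duration, and interpretation."""
    start = time.perf_counter()
    raw = fn(inputs)
    duration = time.perf_counter() - start
    entry = LedgerEntry(task_id, fn.__name__, inputs, raw, duration, interpret(raw))
    ledger.append(entry)
    return entry


def classify(text):
    # Stand-in for an LLM call.
    return "RISK: high"


ledger = ExecutionLedger()
entry = run_recorded(
    ledger, "task-1", classify, "contract text",
    interpret=lambda raw: raw.split(": ")[1],
)
```

Keeping the raw output next to its interpretation matters: when the interpretation is later questioned, the evidence it was derived from is still there to inspect.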

If you cannot replay and interrogate every probabilistic decision your system makes, you are flying blind. If your system is built on a mutable database, reconstructing the past is an exercise in forensic accounting. Technologies that preserve this history inherently are key.

I don't see an execution ledger as an afterthought; I believe it is a prerequisite for building trustworthy AI systems.

5. Dynamic adaptation vs. static plans

A static workflow definition is a plan made when you know the least.

Traditional orchestration engines rely on predefined DAGs. But agentic systems, by definition, adapt. The optimal path forward often depends on the results of the previous stochastic step. The system must be able to observe its own output and adjust its plan accordingly.

The orchestration engine must support dynamic stages guided by "assessors". After a task runs, an assessor (which we're modelling as deterministic, imperative code) evaluates the output and determines the continuation. This might involve looping back, skipping steps, or even dynamically rewriting the workflow DAG at runtime.
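A deterministic assessor can be as small as a function from a task's output to a named continuation. The sketch below is an assumption-laden illustration: the action names (`continue`, `retry`, `rewrite`), the `confidence` field, the 0.8 threshold, and the stage names are all hypothetical.

```python
def assess_summary(output, attempt, max_attempts=3):
    """Deterministic assessor: inspect a task's output and name the continuation."""
    if output.get("confidence", 0.0) >= 0.8:
        return ("continue", "publish")          # proceed to the next stage
    if attempt < max_attempts:
        return ("retry", "summarize")           # loop back and try again
    return ("rewrite", ["escalate_to_human"])   # replace the remaining plan


def run_stage(task, assessor, max_attempts=3):
    """Run a task, letting the assessor choose the continuation after each attempt."""
    for attempt in range(1, max_attempts + 1):
        output = task(attempt)
        action, target = assessor(output, attempt, max_attempts)
        if action != "retry":
            return action, target
    raise RuntimeError("assessor kept requesting retries past max_attempts")


def improving_task(attempt):
    # Stand-in for a stochastic step whose output gets better on the second try.
    return {"confidence": 0.4 if attempt == 1 else 0.9}


def weak_task(attempt):
    # Stand-in for a step that never produces an acceptable result.
    return {"confidence": 0.2}


good = run_stage(improving_task, assess_summary)
bad = run_stage(weak_task, assess_summary)
```

The stochastic step decides nothing about control flow. It only produces output, and ordinary, testable code decides what happens next.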

This is how you bridge the gap between predefined orchestration and autonomous behavior. It allows the system to handle complexity and uncertainty without requiring the human operator to pre-program every possible contingency. Designing the rules for this adaptation requires profound clarity about the problem domain, not just the technology.

6. Isolating the plumbing

We often complect (intertwine) the core logic of a task (the prompt engineering, the tool definitions, the business rules) with the accidental complexity of its execution (error handling, retries, timing, validation, logging).

This entanglement guarantees rigidity. When the core logic is polluted with infrastructure concerns, it becomes difficult to reason about, test, and evolve.

If you want a system that can adapt, you must separate these concerns.

We achieve this through the use of interceptor chains - middleware wrapping the execution of every task. Interceptors provide a mechanism to inject cross-cutting "plumbing" (like automated retries with exponential backoff, output validation against a schema, or detailed tracing) without polluting the core logic.
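Here is a minimal sketch of an interceptor chain as plain function wrapping. The names `with_retries`, `with_validation`, and `build_chain` are hypothetical, and a real chain would likely carry a richer context than a single request and result.

```python
import time


def with_retries(max_attempts=3, base_delay=0.01, sleep=time.sleep):
    """Interceptor: retry the wrapped handler with exponential backoff."""
    def interceptor(next_fn):
        def wrapped(request):
            for attempt in range(max_attempts):
                try:
                    return next_fn(request)
                except Exception:
                    if attempt == max_attempts - 1:
                        raise  # out of attempts: surface the last error
                    sleep(base_delay * (2 ** attempt))
        return wrapped
    return interceptor


def with_validation(required_keys):
    """Interceptor: reject outputs that are missing required keys."""
    def interceptor(next_fn):
        def wrapped(request):
            result = next_fn(request)
            missing = [k for k in required_keys if k not in result]
            if missing:
                raise ValueError(f"output missing keys: {missing}")
            return result
        return wrapped
    return interceptor


def build_chain(task, interceptors):
    """Wrap `task` so that the first interceptor listed is the outermost."""
    handler = task
    for interceptor in reversed(interceptors):
        handler = interceptor(handler)
    return handler


attempts = []

def summarize(request):
    # Core logic only. Stand-in for an LLM call whose first answer is malformed.
    attempts.append(request)
    return {} if len(attempts) == 1 else {"summary": "ok"}


chain = build_chain(
    summarize,
    [with_retries(sleep=lambda seconds: None), with_validation(["summary"])],
)
result = chain("long document text")
```

Ordering is part of the design. Because the retry interceptor sits outside validation here, a malformed output counts as a failure and triggers another attempt. Swap the two and an invalid output would be raised straight to the caller.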

This separation allows the business logic to remain pure and focused on the domain problem, while the infrastructure concerns are handled orthogonally. Choosing where the seams are, and what belongs in an interceptor versus the task itself, is not trivial, but it is essential for long-term maintainability.

The path forward

I believe that building systems that are both intelligent and trustworthy requires a synthesis of decades-old principles - functional programming, immutable data structures, dataflow architecture - with the newest advances in machine learning.

It requires recognizing that the harness must be stronger than the force it seeks to contain.

And above all, it requires the space and time to step back from the keyboard and think.